Character Error Rate (CER) — The Standard Metric for Transcription Accuracy

CER is the most widely used metric for evaluating handwritten text recognition. It measures the percentage of characters that differ between an AI transcription and a human-verified reference — and it is the number reviewers, funders, and fellow researchers will ask you about.

How CER is calculated

The Character Error Rate measures the edit distance between the AI transcription and the ground truth, normalized by the length of the reference text.

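CER = (S + D + I) / N

S = substitutions, D = deletions, I = insertions, N = total characters in the reference text. For example, 5 errors against a 25-character reference give a CER of 5 / 25 = 20.0%. Because insertions are counted against the reference length, CER can exceed 100% when the transcription contains many extra characters.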
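The calculation can be sketched in a few lines of Python. This is a minimal illustration using a textbook dynamic-programming Levenshtein distance, not the implementation Transkribus uses; the function names `levenshtein` and `cer` and the sample strings are assumptions made for this example.

```python
def levenshtein(reference: str, hypothesis: str) -> int:
    """Minimum number of single-character substitutions, deletions, and insertions."""
    # previous[j] is the distance between the reference prefix processed so far
    # and the first j characters of the hypothesis.
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            cost = 0 if ref_char == hyp_char else 1
            current.append(min(
                previous[j] + 1,         # deletion: reference character missing from the output
                current[j - 1] + 1,      # insertion: extra character in the output
                previous[j - 1] + cost,  # match (cost 0) or substitution (cost 1)
            ))
        previous = current
    return previous[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character Error Rate: edit distance divided by the reference length."""
    if not reference:
        raise ValueError("reference text must not be empty")
    return levenshtein(reference, hypothesis) / len(reference)


# Illustrative strings: a 25-character reference and an output containing
# three substitutions, one deletion, and one insertion.
reference = "The quick brown fox jumps"
hypothesis = "Tne qu1ck brwn fok jumpss"
print(f"{cer(reference, hypothesis):.1%}")  # 20.0%
```

Note that this sketch compares the strings exactly as given. Whether whitespace, case, or punctuation are normalised before comparison changes the result, so state the convention alongside any CER you report.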

Excellent

Publication-ready accuracy. Suitable for critical editions and scholarly work with minimal manual review.

Good

Suitable for most research workflows. Spot-check and correct key passages before publishing.

Needs review

Usable for keyword search and indexing. Consider training a custom model for better results.

How much Ground Truth do you need?

The amount of training data depends on your material, your target accuracy, and how many different hands you're dealing with.

Single-hand collections

For documents written by one person in a consistent hand, 15–30 pages of Ground Truth typically achieve good results (CER under 5%).

Multi-hand collections

Registers, court records, or correspondence with many writers need more diversity in training data — typically 50–100 pages across different hands.

Start with a public model

300+ pre-trained models are available. Start with one, evaluate its CER on your material, and only train a custom model if needed.

Iterative improvement

You don't need all Ground Truth upfront. Start with 15 pages, train, evaluate, add more pages where the model struggles, retrain.
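One way to decide which pages to add in the next round is to rank evaluated pages by their CER and correct the worst ones first. The sketch below assumes you already have a per-page CER for a held-out sample; the function name `pages_to_correct_next`, the page identifiers, and the values are hypothetical.

```python
def pages_to_correct_next(cer_by_page: dict[str, float], batch_size: int = 5) -> list[str]:
    """Return the pages with the highest CER, worst first."""
    ranked = sorted(cer_by_page, key=cer_by_page.get, reverse=True)
    return ranked[:batch_size]


# Hypothetical evaluation results after a first training round.
evaluation = {"page_012": 0.03, "page_047": 0.12, "page_101": 0.07}
print(pages_to_correct_next(evaluation, batch_size=2))  # ['page_047', 'page_101']
```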

Target CER depends on use case

Full-text search works well at 5–8% CER. Scholarly editions may need under 2%. Keyword spotting tolerates even 10–15%.
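The targets above can be turned into a quick check of what a measured CER is good enough for. This is only a sketch: it uses the upper end of each range quoted above as a cut-off, and the dictionary keys and function name are illustrative labels, not Transkribus settings.

```python
# Upper CER bounds taken from the guidance above (as fractions, not percentages).
MAX_CER_BY_USE_CASE = {
    "scholarly_edition": 0.02,
    "full_text_search": 0.08,
    "keyword_spotting": 0.15,
}


def suitable_use_cases(measured_cer: float) -> list[str]:
    """List the use cases whose CER bound the measured value does not exceed."""
    return [name for name, limit in MAX_CER_BY_USE_CASE.items() if measured_cer <= limit]


print(suitable_use_cases(0.06))  # ['full_text_search', 'keyword_spotting']
```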

Quality over quantity

Accurate Ground Truth matters more than volume. 20 carefully corrected pages outperform 100 pages with errors in the reference.

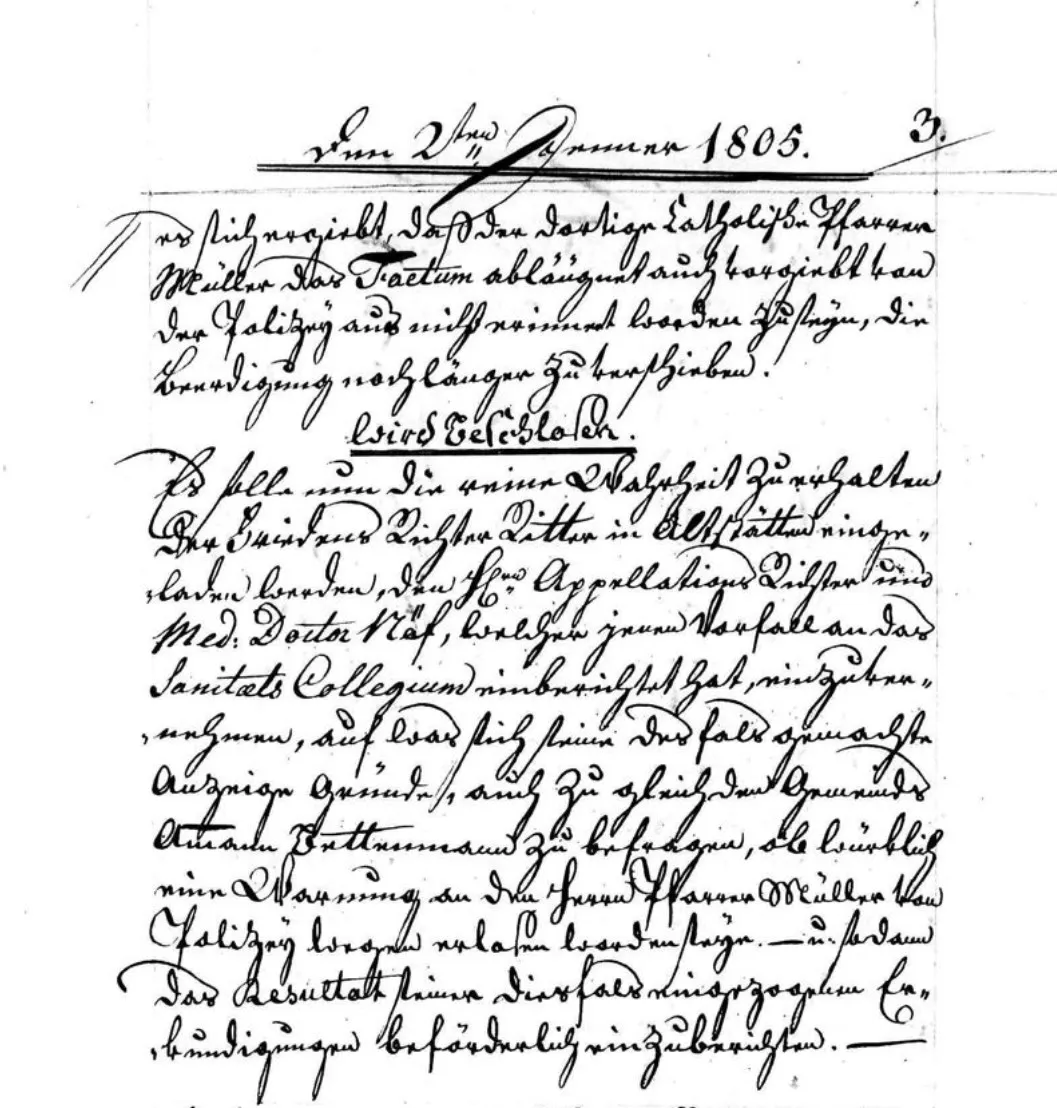

See how CER works — compare transcription quality at a glance

Each example below shows a Ground Truth line and the corresponding recognised text. Characters that differ are highlighted. The CER is calculated automatically from the Levenshtein edit distance.
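A comparable side-by-side view can be approximated with Python's standard `difflib` module. The sketch below marks each differing span as `[expected->produced]`; the function name and sample line are assumptions for illustration. `SequenceMatcher` produces a readable alignment but is not guaranteed to find the minimal edit script, so use it for display and compute the CER itself from the Levenshtein distance.

```python
from difflib import SequenceMatcher


def highlight_differences(ground_truth: str, recognised: str) -> str:
    """Return the ground truth with differing spans marked as [expected->produced]."""
    matcher = SequenceMatcher(None, ground_truth, recognised, autojunk=False)
    parts = []
    for tag, gt_start, gt_end, rec_start, rec_end in matcher.get_opcodes():
        if tag == "equal":
            parts.append(ground_truth[gt_start:gt_end])
        else:
            # 'replace', 'delete', or 'insert': show both sides of the mismatch.
            expected = ground_truth[gt_start:gt_end]
            produced = recognised[rec_start:rec_end]
            parts.append(f"[{expected}->{produced}]")
    return "".join(parts)


# Illustrative line: the model reads "m" as "rn".
print(highlight_differences("Anno Domini", "Anno Dornini"))  # Anno Do[m->rn]ini
```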

Benchmarks

CER benchmarks across document types

Real-world CER values depend on the document type, script, and the model used. The table below compares typical results from Transkribus AI models against standard OCR engines.

| Document type | Transkribus HTR | Standard OCR |
|---|---|---|
| Printed modern text (post-1950) | 0.5–1% CER | 1–3% CER |
| Typewritten documents (1920s–1960s) | 1–3% CER | 3–8% CER |
| Handwritten 19th century | 2–5% CER | 15–30% CER |
| Kurrent / Sütterlin (18th–19th c.) | 3–8% CER | Fails |
| Medieval manuscripts | 5–15% CER | Fails |

Values are indicative ranges based on well-matched models. Actual CER depends on document condition, handwriting consistency, and model training data.

What affects CER

Six factors that determine how accurately your documents can be transcribed — and what you can do about each one.

Document quality

Faded ink, stains, bleed-through, and physical damage all introduce noise that makes characters harder to recognise. High-quality scans of well-preserved originals yield the best CER.

Script type

Modern cursive is easier to recognise than Kurrent, Sütterlin, or medieval book hands. The further the script is from modern letterforms, the more training data the model needs.

Model training data

A model trained on material similar to yours will dramatically outperform a generic one. Custom models trained on 50–100 pages of Ground Truth can cut CER by half or more.

Image resolution

Scans at 300 DPI or higher preserve fine details needed to distinguish similar-looking characters. Low-resolution images increase substitution errors significantly.

Layout complexity

Multi-column layouts, marginalia, tables, and interlinear annotations require accurate layout analysis. Errors in text region detection directly increase the effective CER.

Language

Languages with complex diacritics, non-Latin scripts, or extensive ligatures present additional challenges. Dedicated language-specific models typically achieve the best results.
