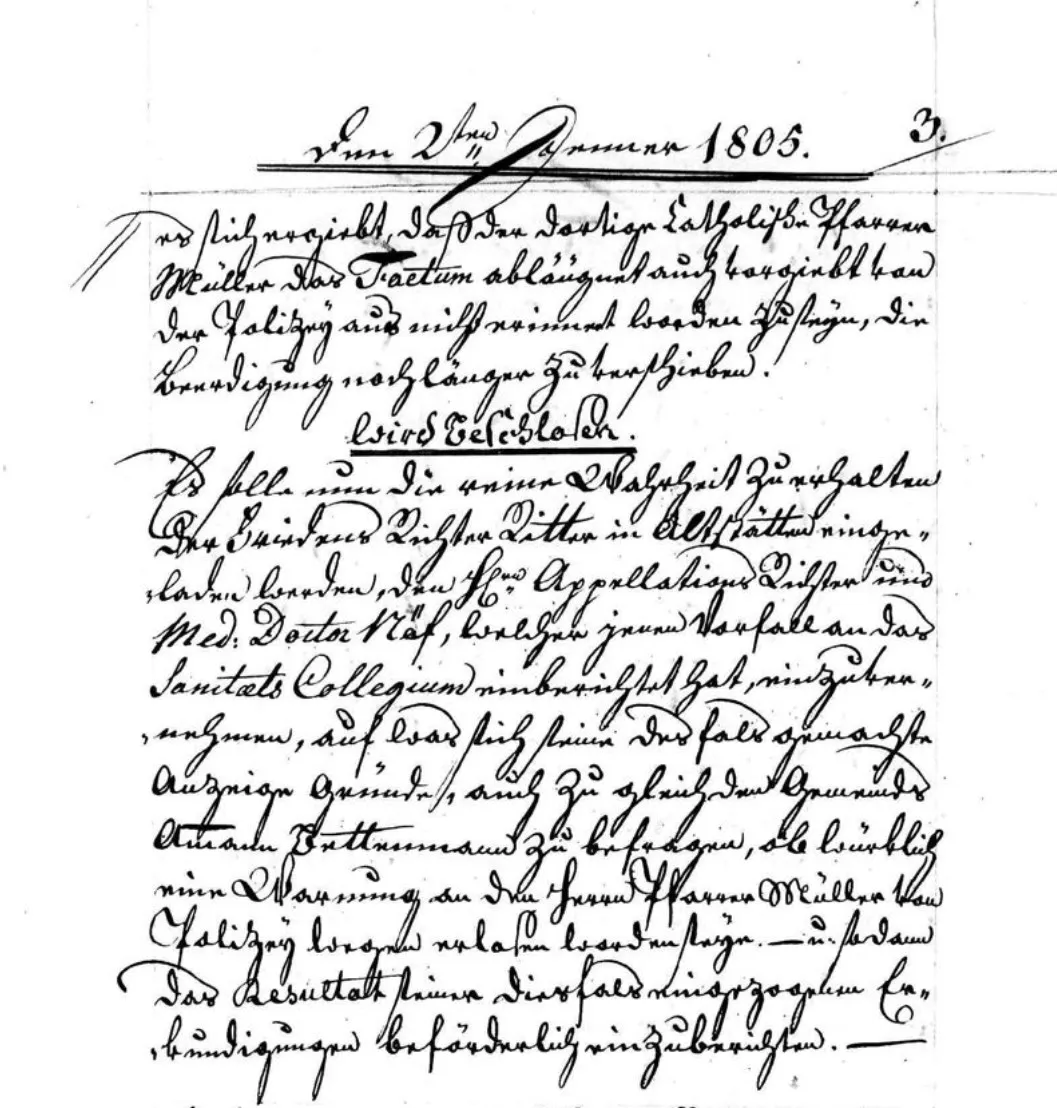

The problem

The Hidden Collections Crisis: Archive Digitization Backlogs Keep Growing

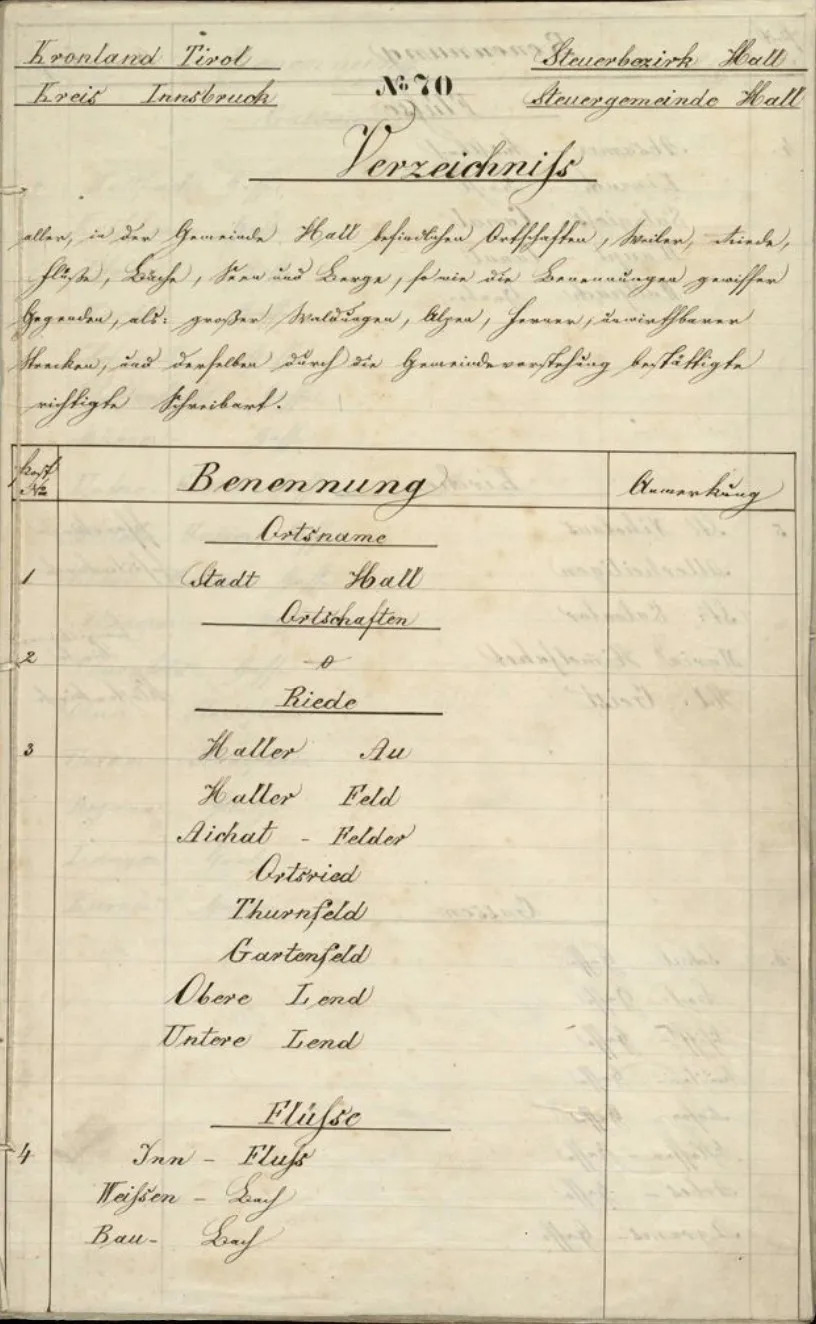

The solution

Reduce Archival Backlog with AI: From Unprocessed Boxes to Searchable Records

Comparison

AI-Assisted Processing vs. Manual Transcription for Archives

Archives face a fundamental throughput problem: millions of pages are still waiting to be catalogued, made searchable, and opened to users. Here is how AI-assisted processing compares to traditional manual workflows.

| Feature | Transkribus AI Processing | Manual Transcription |
|---|---|---|
| Throughput | Thousands of pages per day with batch processing; scales with collection size | A skilled transcriber processes 5–15 pages per day depending on difficulty |
| Cost per page | Fraction of a cent per page with credit-based pricing | Labour-intensive; costs accumulate linearly with every page |
| Consistency | Same model produces consistent output across thousands of pages | Quality varies with the transcriber, fatigue, and differences in interpretation |
| Searchability | Every processed page becomes full-text searchable immediately | Only transcribed pages are searchable; the backlog remains dark |
| Handling historical scripts | 300+ public models covering scripts from the 9th century to the present | Requires specialised paleography training; few staff have the necessary skills |
| Time to access | Collections become accessible within days or weeks of digitisation | Backlogs of years or decades are common in large institutions |
| Quality review | Confidence scores flag uncertain lines for targeted human review | Requires full proofreading of every transcription |

This comparison reflects typical institutional workflows. AI processing works best as a complement to human expertise: an automated first pass followed by targeted manual review.
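To make the throughput gap concrete, the figures in the table can be turned into a rough backlog estimate. The sketch below uses the 5–15 pages per day quoted for manual transcription. The backlog size (500,000 pages), the 250 working days per year, and the 2,000 pages per day used for batch processing are illustrative assumptions, not measured values.

```python
import math


def days_to_clear(backlog_pages: int, pages_per_day: float, workers: int = 1) -> int:
    """Working days needed to clear a backlog at a steady daily rate."""
    if pages_per_day <= 0 or workers <= 0:
        raise ValueError("pages_per_day and workers must be positive")
    return math.ceil(backlog_pages / (pages_per_day * workers))


BACKLOG_PAGES = 500_000        # assumption: size of a hypothetical backlog
WORKING_DAYS_PER_YEAR = 250    # assumption

scenarios = {
    "manual, difficult material (5 pages/day)": 5,
    "manual, easy material (15 pages/day)": 15,
    "batch processing (assumed 2,000 pages/day)": 2_000,
}

for label, rate in scenarios.items():
    days = days_to_clear(BACKLOG_PAGES, rate)
    years = days / WORKING_DAYS_PER_YEAR
    print(f"{label}: {days:,} working days (about {years:,.1f} years)")
```

The estimate ignores review time on both sides, so it is best read as an order-of-magnitude comparison rather than a project plan.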

How to process an archival collection in 4 steps

Upload scanned collections

Upload entire series or fonds as multi-page PDFs, TIFFs, or image batches. Transkribus handles layout detection — columns, tables, marginalia — automatically.

Select an AI model

Choose from 300+ public models filtered by language, century, and script type. For mixed collections, run multiple models on different document groups within the same project.
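For a mixed collection, one way to plan the model choice is a small routing table that assigns each document group to a model before anything is queued. The sketch below is illustrative only: the model IDs, the metadata fields (`language`, `century`), and the fallback model are hypothetical placeholders, not real Transkribus identifiers.

```python
from collections import defaultdict

# Hypothetical model IDs keyed by (language, century); replace with the IDs
# of the public or custom models actually chosen for the collection.
MODEL_BY_GROUP = {
    ("de", 18): 10001,
    ("de", 19): 10002,
    ("la", 15): 10003,
}
DEFAULT_MODEL = 10000  # hypothetical general-purpose fallback


def group_by_model(documents):
    """Return {model_id: [doc_id, ...]} so each group can be queued separately."""
    batches = defaultdict(list)
    for doc in documents:
        key = (doc["language"], doc["century"])
        batches[MODEL_BY_GROUP.get(key, DEFAULT_MODEL)].append(doc["doc_id"])
    return dict(batches)


documents = [
    {"doc_id": 1, "language": "de", "century": 18},
    {"doc_id": 2, "language": "la", "century": 15},
    {"doc_id": 3, "language": "de", "century": 18},
    {"doc_id": 4, "language": "fr", "century": 17},  # no specific model: falls back
]

for model_id, doc_ids in group_by_model(documents).items():
    print(f"model {model_id}: documents {doc_ids}")
```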

Run batch recognition

Queue thousands of pages for processing. Transkribus runs text recognition in the background — no manual intervention required. Monitor progress from the dashboard.

Export and integrate

Export results as PAGE XML, ALTO XML, TEI-XML, plain text, or searchable PDF. Ingest directly into ArchivesSpace or AtoM, or publish via Transkribus Sites.
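Once a page has been exported as PAGE XML, the recognised lines can be read with standard XML tooling and routed to targeted review. The sketch below parses a small hand-written sample with Python's built-in `xml.etree.ElementTree`. It assumes the 2013-07-15 PAGE namespace and a `conf` attribute on each line's `TextEquiv` element; real exports may use a different schema version or omit line-level confidence. The 0.80 review threshold is an arbitrary example value.

```python
import xml.etree.ElementTree as ET

# Assumption: the export uses the 2013-07-15 PAGE content schema.
NS = {"pc": "http://schema.primaresearch.org/PAGE/gts/pagecontent/2013-07-15"}

# Hand-written sample standing in for one exported page.
SAMPLE = """<PcGts xmlns="http://schema.primaresearch.org/PAGE/gts/pagecontent/2013-07-15">
  <Page imageFilename="box12_0001.tif" imageWidth="2000" imageHeight="3000">
    <TextRegion id="r1">
      <TextLine id="r1l1">
        <TextEquiv conf="0.97"><Unicode>Received of Mr. Hale</Unicode></TextEquiv>
      </TextLine>
      <TextLine id="r1l2">
        <TextEquiv conf="0.61"><Unicode>the summ of ten pounds</Unicode></TextEquiv>
      </TextLine>
    </TextRegion>
  </Page>
</PcGts>"""


def read_lines(page_xml: str):
    """Return (line_id, text, confidence or None) for every TextLine."""
    root = ET.fromstring(page_xml)
    lines = []
    for line in root.iterfind(".//pc:TextLine", NS):
        equiv = line.find("pc:TextEquiv", NS)  # line-level transcription only
        if equiv is None:
            continue
        text = equiv.findtext("pc:Unicode", default="", namespaces=NS)
        conf = equiv.get("conf")
        lines.append((line.get("id"), text, float(conf) if conf is not None else None))
    return lines


def needs_review(lines, threshold: float = 0.80):
    """Lines with no confidence value, or one below the threshold."""
    return [item for item in lines if item[2] is None or item[2] < threshold]


for line_id, text, conf in needs_review(read_lines(SAMPLE)):
    print(f"review {line_id} (conf={conf}): {text}")
```

Treating a missing confidence value as "needs review" is a deliberately cautious choice, so that lines without a score are never silently accepted.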

At scale

Automated Archival Processing with the Metagrapho API

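```python
import time

import requests

API = "https://transkribus.eu/processing/v1"
TOKEN = "your-api-token"
HEADERS = {"Authorization": f"Bearer {TOKEN}"}

# 1. Upload collection
upload = requests.post(
    f"{API}/uploads",
    headers=HEADERS,
    json={"collectionId": 12345},
    timeout=30,
)
upload.raise_for_status()  # stop early on HTTP errors

# 2. Start recognition on all pages
job = requests.post(
    f"{API}/processes",
    headers=HEADERS,
    json={
        "docId": upload.json()["docId"],
        "htrId": 53042,  # model ID
        "pages": "all",
    },
    timeout=30,
)
job.raise_for_status()
process_id = job.json()["processId"]

# 3. Poll for completion
# Assumption: "FINISHED" and "FAILED" are the terminal states; check the
# API reference for the actual values.
for _ in range(360):  # give up after about an hour
    response = requests.get(f"{API}/processes/{process_id}", headers=HEADERS, timeout=30)
    response.raise_for_status()
    status = response.json()
    print(f"Status: {status['state']}")
    if status["state"] in ("FINISHED", "FAILED"):
        break
    time.sleep(10)
```

Frequently Asked Questions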

Related resources

More for archives and institutions

Ready to address your archival backlog?

Speak with our team about institutional plans for large-scale collection processing, or create a free account to evaluate Transkribus on your own materials.

Used by 2,000+ archives and libraries worldwide